In this post, I talk about my recent experience with the Microsoft AI-900 certification and the resources I used to study for it.

Table of Contents

Introduction

On 04 November 2022, I earned the Microsoft Certified Azure AI Fundamentals certification. I’ve had my eye on the AI-900 since passing the SC-900 over Summer. Last month I found the time to sit down with it properly! This is my fourth Microsoft certification, joining my other badges on Credly.

Firstly, I’ll talk about my motivation for studying for the Microsoft AI-900. Then I’ll talk about the resources I used and how they fitted into my learning plan.

Motivation

In this section, I’ll talk about my reasons for studying for the Microsoft AI-900.

Increased Effectiveness

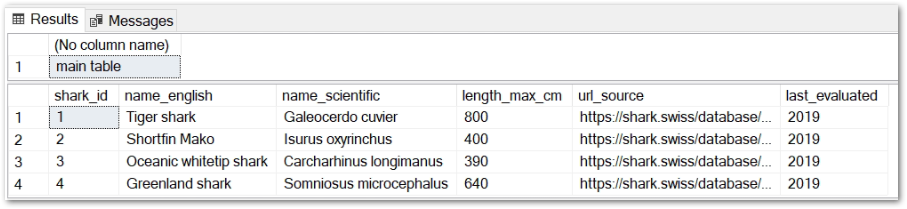

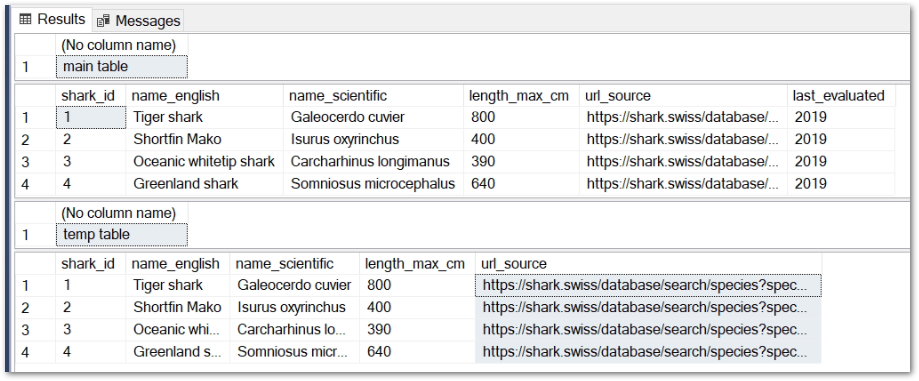

A common Data Engineering task is extracting data. This usually involves structured data, which have well-defined data models that help to organise and map the data available.

Sources of structured data include:

- CSV data extracts.

- Excel spreadsheets.

- SQL database tables.

Increasingly, insights are being sought from unstructured data. This is harder to extract, as unstructured data aren’t arranged according to preset data models or schemas.

Examples of unstructured data sources include:

- Inbound correspondence.

- Recorded calls.

- Social media activity.

Historically, extracting unstructured data needed special equipment, complex software and dedicated personnel. In recent years, public cloud providers have produced Artificial Intelligence and Machine Learning services aimed at quickly and easily extracting unstructured data.

In the case of Microsoft Azure, these include:

- Azure Form Recognizer for correspondence analysis.

- Speech to Text for transcribing phone calls.

- Azure Cognitive Service for Language for social media analysis.

Knowing that these tools exist and understanding their use cases will help me create future data pipelines and ETL processes for unstructured data sources. This will add value to the data and will make me a more effective Data Engineer.

And on that note…

Skill Diversification

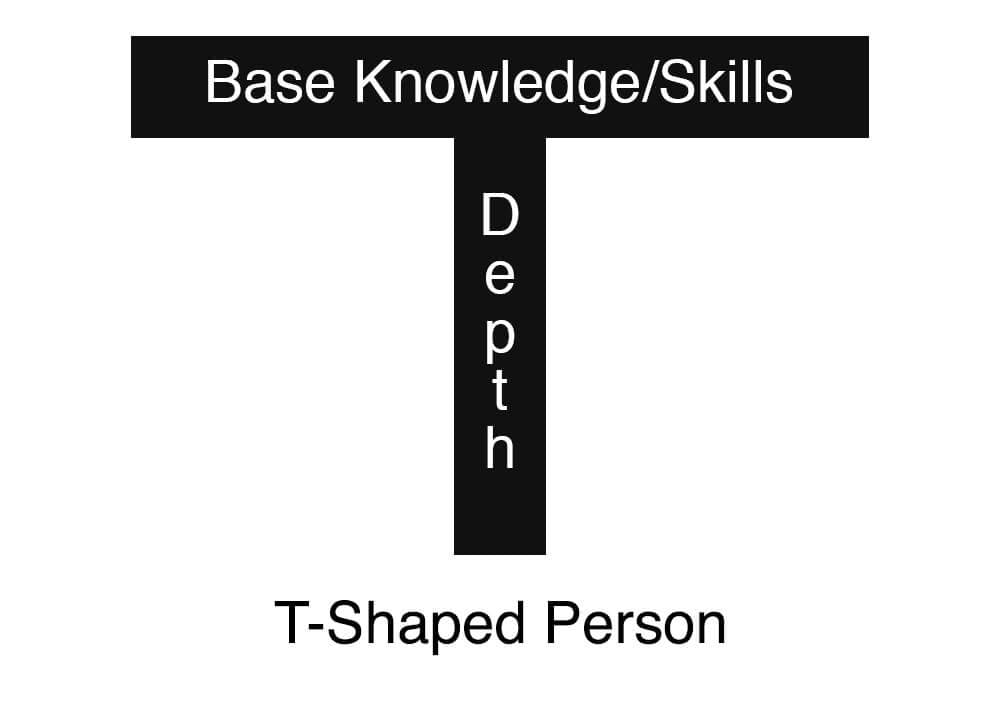

Recently I was introduced to the idea of T-shaped skills in a CollegeInfoGeek article by Ransom Patterson. Ransom summarises a T-shaped person as having:

…deep knowledge/skills in one area and a broad base of general supporting knowledge/skills.

“The T-Shaped Person: Building Deep Expertise AND a Wide Knowledge Base” – Ransom Patterson on CollegeInfoGeek

Ransom’s article made me realise that I’ve been developing T-shaped skills for a while. I’ve then applied these skills back to my Data Engineering role. For example:

- Writing my PowerShell music upload script helped me write scripts that monitor EBS volume snapshots.

- Reading George Pólya’s How To Solve It helped me resolve problems when writing Python data ingestion scripts.

- Learning about DynamoDB for my AWS Developer Associate certification helped me extract data from DynamoDB to Redshift.

My studying for the AI-900 is a continuation of this. This isn’t me saying “I want to be a Machine Learning Engineer now!” This is me seeing a topic, being interested in it and examining how it could be useful for my personal and professional interests.

Multi-Cloud Fluency

This kind of follows on from T-shaped skills.

Earlier in 2022, Forrest Brazeal examined the benefits of multi-cloud fluency, and built a case summarised in one of his tweets:

1. Have a “primary” cloud that feels like your native language.

— Forrest Brazeal (@forrestbrazeal) January 7, 2022

Go deep on your primary cloud for hirable expertise, broad on your “secondary” cloud for situational awareness.

Another way to put this: build on your primary, get certified on your secondary.

This applies to the data world pretty well, as many public cloud services can interact with each other across vendor boundaries.

For example:

- An Amazon Athena connector exists for Microsoft Power BI.

- Azure Data Factory can copy and transform data from Amazon S3.

- GCP BigQuery Omni can access data stored in Azure Blob storage.

With multi-cloud fluency, decisions can be made based on using the best services for the job as opposed to choosing services based on vendor or familiarity alone.

This GuyInACube video gives an example of this using the Microsoft Power BI Service:

To connect the Power BI Service to an AWS data source, a data gateway needs to be running on an EC2 instance to handle authentication. This introduces server costs and network management.

Conversely, data stored in Azure (Azure SQL Database in the video) can be accessed by other Azure services with a single click. As a multi-cloud fluent Data Engineer in this scenario, I now have options where previously there was only one choice.

Improved multi-cloud fluency means I can use AWS for some jobs and Azure for others, in the same way that I use Windows for some jobs and Linux for others. It’s about having the knowledge and skills to choose the best tools for the job.

Resources

In this section, I’ll talk about the resources I used to study for the Microsoft AI-900.

John Savill

John Savill’s Technical Training YouTube channel started in 2008. Since then he’s created a wide range of videos from deep dives to weekly updates. In addition, he has numerous playlists for many Microsoft certifications including the AI-900.

Having watched John’s SC-900 video I knew I was in good hands. John has a talent for simple, straightforward discussions of important topics. His AI-900 video was the first resource I used when starting to study, and the last resource I used before taking the exam.

Exceptional work as usual John!

Microsoft Learn

Microsoft Learn was my main study resource for the AI-900. It has a lot going for it! The content is up to date, the structure makes it easy to dip in and out and the knowledge checks and XP system keep the momentum up.

To start, I attended one of Microsoft’s Virtual Training Days. The courses are free, and their AI Fundaments course currently provides a free certification voucher when finished. Microsoft Product Manager Loraine Lawrence presented the course and it was a great introduction to the various Azure AI services.

Complimenting this, Microsoft Learn has a free learning path with six modules tailed for the AI-900 exam. These modules are well-organised and communicate important knowledge without being too complex.

The modules include supporting labs for learning reinforcement. The labs are well documented and use the Azure Portal, Azure Cloud Shell and Git to build skills and real experience.

I didn’t end up using the labs due to time constraints, but someone else had me covered on that front…

Andrew Brown

Andrew Brown is the CEO of ExamPro. He has numerous freeCodeCamp videos, including his free AI-900 one.

I’ve used some of Andrew’s AWS resources before and found this to be of his usual high standard. The video is four hours long, with dozens of small lectures that are time-stamped in the video description. This made it easy to replay sections during my studies.

Andrew also includes two hours of him using Azure services like Computer Vision, Form Recognizer and QnAMaker. This partnered with the Microsoft Learn material very well and helped me understand and visualise topics I wasn’t 100% on.

Summary

In this post, I talked about my recent experience with the Microsoft AI-900 certification and the resources I used to study for it. I can definitely use the skills I’ve picked up moving forwards, and the certification is some great self-validation!

If this post has been useful, please feel free to follow me on the following platforms for future updates:

Thanks for reading ~~^~~